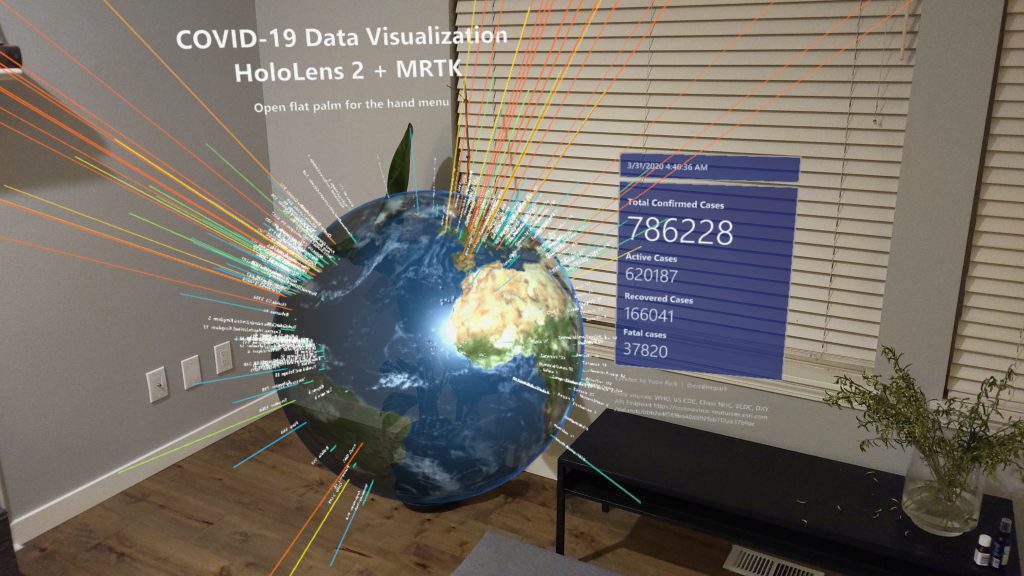

This is my personal experimental project for HoloLens 2. It explores how volumetric data can be represented in physical space with direct hand-tracking input in mixed reality. It is built with Microsoft’s Mixed Reality Toolkit (MRTK).

The project also works with HoloLens (1st gen); use the static menu instead of the hand menu.

GitHub

I have published the Unity project on GitHub:

https://github.com/cre8ivepark/COVID19DataVisualizationHoloLens2

Data source

ESRI’s Coronavirus COVID-19 Cases feature layer

https://coronavirus-resources.esri.com/datasets/bbb2e4f589ba40d692fab712ae37b9ac

Project

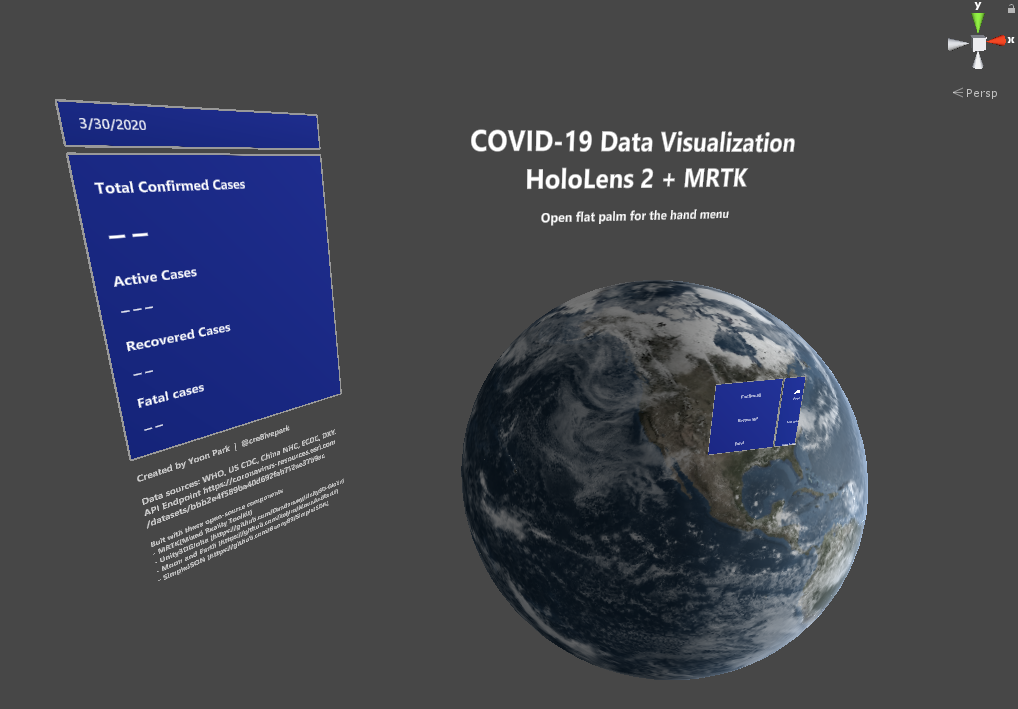

The DataVisualizer.cs script contains the code for retrieving and parsing the JSON data and visualizing it as graphs. Graph elements are added to GraphContainerConfirmed, GraphContainerRecovered, GraphContainerFatal, and LabelContainer. CreateMeshes() creates the graphs for the three data values and the text labels. The main menu’s radio buttons simply show or hide the GraphContainer objects.
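To make the data flow concrete, here is a minimal, language-neutral sketch in Python (the project itself is Unity C#, and this is not the code in DataVisualizer.cs). It parses a feature-layer style JSON response, places each entry on a unit sphere from its latitude and longitude, and scales a bar height linearly against the largest value. The sample data, the field names (`Country_Region`, `Lat`, `Long_`, `Confirmed`, `Recovered`, `Deaths`), the helper names, and the y-up axis convention are all assumptions made for illustration.

```python
import json
import math

# Hypothetical sample shaped like a feature-layer query response.
# The field names and values are assumptions, not real data.
SAMPLE = """
{"features": [
  {"attributes": {"Country_Region": "A", "Lat": 0.0, "Long_": 0.0,
                  "Confirmed": 200, "Recovered": 100, "Deaths": 10}},
  {"attributes": {"Country_Region": "B", "Lat": 90.0, "Long_": 0.0,
                  "Confirmed": 50, "Recovered": 25, "Deaths": 5}}
]}
"""

def lat_lon_to_unit_vector(lat_deg, lon_deg):
    """Map latitude/longitude in degrees to a point on a unit sphere (y is up)."""
    lat = math.radians(lat_deg)
    lon = math.radians(lon_deg)
    x = math.cos(lat) * math.cos(lon)
    y = math.sin(lat)
    z = math.cos(lat) * math.sin(lon)
    return (x, y, z)

def build_bars(raw_json, field, max_height=0.5):
    """Return one (label, position, height) tuple per feature for one data field."""
    rows = [f["attributes"] for f in json.loads(raw_json)["features"]]
    peak = max((r[field] or 0) for r in rows) or 1  # avoid dividing by zero
    bars = []
    for r in rows:
        value = r[field] or 0  # treat missing (null) values as zero
        position = lat_lon_to_unit_vector(r["Lat"], r["Long_"])
        height = max_height * value / peak  # linear scaling to the largest value
        bars.append((r["Country_Region"], position, height))
    return bars

bars = build_bars(SAMPLE, "Confirmed")
```

Calling `build_bars` once per field (confirmed, recovered, fatal) would give one set of bars per graph container. Linear scaling lets a few very large values dwarf the rest, which is one reason data normalization can need extra work.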

The menu follows the user with tag-along behavior provided by MRTK’s RadialView solver. The pin button toggles the tag-along behavior. The menu’s backplate can be grabbed and moved; doing so automatically disables tag-along and makes the menu world-locked.
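The tag-along rules boil down to a tiny state model. The sketch below is a toy illustration in Python, not MRTK code: the class and method names are hypothetical, and it assumes tag-along is on when the menu first appears. In the Unity project the same state presumably corresponds to whether the RadialView solver is active.

```python
class MenuFollowState:
    """Toy model of the menu's tag-along rules; not MRTK code."""

    def __init__(self):
        self.follows_user = True  # assumed default: tag-along starts enabled

    def press_pin(self):
        # The pin button flips tag-along on or off.
        self.follows_user = not self.follows_user

    def grab_backplate(self):
        # Grabbing and moving the menu always leaves it world-locked.
        self.follows_user = False

menu = MenuFollowState()
menu.grab_backplate()  # the menu is now world-locked
menu.press_pin()       # tag-along is switched back on
```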

The menu’s toggle buttons show and hide GraphContainer and LabelContainer. Use the sliders to configure the earth rendering options:

- Earth’s color saturation level

- Cloud opacity

- Sea color saturation level
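For readers curious what such sliders typically control, here is a small Python sketch of the underlying color math: saturation as a blend between a color and its gray equivalent, and cloud opacity as an alpha blend over the surface. This is a generic formulation using Rec. 709 luminance weights, not the project's actual shader, and the function names are hypothetical.

```python
def luminance(rgb):
    """Perceived brightness of an (r, g, b) color using Rec. 709 weights."""
    r, g, b = rgb
    return 0.2126 * r + 0.7152 * g + 0.0722 * b

def adjust_saturation(rgb, saturation):
    """Blend between the gray version of a color (0.0) and the original (1.0)."""
    gray = luminance(rgb)
    return tuple(gray + (c - gray) * saturation for c in rgb)

def apply_cloud_layer(surface_rgb, cloud_rgb, opacity):
    """Alpha-blend a cloud color over the surface color."""
    return tuple(s * (1.0 - opacity) + c * opacity
                 for s, c in zip(surface_rgb, cloud_rgb))

# Example: desaturate a sea color by half, then lay 25% opaque white clouds over it.
sea = adjust_saturation((0.1, 0.3, 0.8), 0.5)
shaded = apply_cloud_layer(sea, (1.0, 1.0, 1.0), 0.25)
```

A slider value of 0.0 for saturation yields a fully gray surface, and 1.0 leaves the original color untouched.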

Build and deploy

Please make sure to use ‘Single Pass’ (not ‘Single Pass Instanced’) as the stereo rendering mode so that the graphs render properly on the device.

Built with these open-source components

I was able to create this project using several amazing open-source projects:

- MRTK (Mixed Reality Toolkit) (http://aka.ms/MRTK)

- Unity3DGlobe (https://github.com/Dandarawy/Unity3D-Globe)

- Moon and Earth (https://github.com/keijiro/MoonAndEarth)

- SimpleJSON (https://github.com/Bunny83/SimpleJSON)

Features

- Near interactions with direct grab/move/rotate (one or two-handed)

- Far interactions using hand ray (one or two-handed)

- Main menu to switch the data and change earth rendering options

- Toggle graph and text labels

- Toggle auto-rotate

Known issues

- Data normalization & polish needed

- Two-handed manipulation sometimes shrinks the globe to a tiny size where it can no longer be scaled properly. Use the hand ray to make it bigger again.

Other Stories

-

홀로렌즈2용 타입 인 스페이스 (Type In Space) 앱 디자인 스토리

Background As a designer passionate about typography and spatial computing, I’ve long been captivated by […]

-

MRTK 2 – How to build crucial Spatial Interactions

Learn how to use MRTK to achieve some of the most widely used common interaction […]

-

How to use Meta Quest 2/Quest Pro with MRTK3 Unity for Hand Interactions

With the support of OpenXR, it is now easy to use MRTK with Meta Quest […]

-

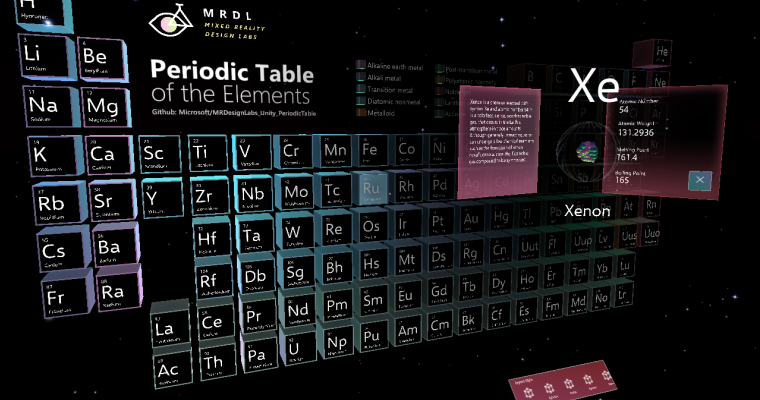

Bringing the Periodic Table of the Elements app to HoloLens 2 with MRTK v2

Sharing the story of updating HoloLens app made with HoloToolkit(HTK) to use new Mixed Reality […]